Transcription

PATTERN RECOGNITIONJIN YOUNG CHOIECE, SEOUL NATIONAL UNIVERSITY

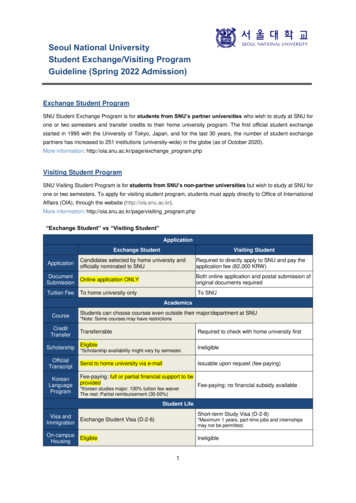

NoticeLecture Notes: SlidesReferences:Pattern Classification by Richard O. Duda, et al.Pattern Recognition and Machine Learning by CristopherM. BishopAssistant: 박슬기, 133(ASRI)-412, seulki.park@snu.ac.krEvaluation: Quiz 40%, Midterm 30%, Final 30%,Video: Every week two videos are uploaded.Video 1: upload: Sun. 09:00, Quiz Due: Tue. 24:00Video 2: upload: Wed. 09:00, Quiz Due: Fri. 24:00Class web: etl.snu.ac.krOffice: 133(ASRI)-406, jychoi@snu.ac.kr

INTRODUCTION TOAI: ARTIFICIAL INTELLIGENCEML: MACHINE LEARNINGDL: DEEP LEARNINGJIN YOUNG CHOIECE, SEOUL NATIONAL UNIVERSITY

Artificial n TheoryStatistical Machine LearningData baseSearch, InferenceDecision TreeSymbolismCognitive scienceMinskyBackpropagation RuleDeep LearningNeural NetworksConnectionismNeuroscienceRosenblatt

Artificial IntelligenceLearning from Experience (Observations, Examples)?Inference(Reasoning) for a Question?

Artificial IntelligenceLearning from Experience (Observations, Examples)If birds are given, then we can learn their features such as # of legs, shape of mouth, etc.If cancer patients are given, then we can observe their symptoms via diagnosis .Inference(Reasoning) for a QuestionIf features of something are given, then we can recognize what is it.If symptoms of a patient are given, then we can infer what is his decease. .

Artificial IntelligenceLearning from Experience (Observations, Examples)If birds are given, then we can learn their features such as # of legs, shape of mouth, etc.If cancer patients are given, then we can record their symptoms via diagnosisIf 𝑦 𝑦1 , then 𝑥 𝑥1𝑦𝑥If 𝑦 𝑦2 , then 𝑥 𝑥2𝑦1 𝑥11 𝑥12 𝑥13 𝑥14 𝑥15 𝑥16DB𝑦2 𝑥21 𝑥22 𝑥23 𝑥24 𝑥25 𝑥26 Decision TreeInference(Reasoning) for a QuestionIf features of something are given, then we can recognize what is it.If symptoms of a patient are given, then we can infer what is his decease.If 𝑥 𝑥1 , then 𝑦 𝑦1𝑦𝑥If 𝑥 𝑥2 , then 𝑦 𝑦2𝑦1 𝑥11 𝑥12 𝑥13 𝑥14 𝑥15 𝑥16𝑦2 𝑥21 𝑥22 𝑥23 𝑥24 𝑥25 𝑥26SymbolismSearch-based Inference Engine

Artificial IntelligenceDensity EstimationLearning from Experience (Observations, Examples)If 𝑦 𝑦1 , then 𝑥 𝑥1If 𝑦 𝑦2 , then 𝑥 𝑥2𝑝 𝑥 𝑥𝑖 𝑦 𝑦𝑗 , 𝑝(𝑦 𝑦𝑗 )Inference(Reasoning) for a QuestionIf 𝑥 𝑥1 , then 𝑦 𝑦1If 𝑥 𝑥2 , then 𝑦 𝑦2𝑝 𝑦 𝑦𝑗 𝑥 𝑥𝑖 𝑝(𝑥 𝑥𝑖 𝑦 𝑦𝑗 )𝑝(𝑦 𝑦𝑗 )𝑝(𝑥),𝑝 𝑥 σ𝑖 𝑝 𝑥 𝑥𝑖 𝑦 𝑦𝑗 𝑝(𝑦 𝑦𝑗 )Bayesian Theory

Artificial IntelligenceDeep Neural Networks for Learning and Inference𝑜 𝑓 𝑊, 𝑥 , ex, 𝑜𝑗 𝑝 𝑦 𝑦𝑗 𝑥 𝑥𝑖Training (learning)Find 𝑊 to minimize the errors between 𝑜𝑗 and 𝑑𝑗for given training data { 𝑥𝑝 , 𝑙𝑝 }Inference(Reasoning)Calculate 𝑜𝑗 via the deep networkConnectionismNetwork TrainingInference (feedforward)

Learning and InferenceHightFeature SpaceWeight

Learning and InferenceA general tree structureHightroot node0internal(split) nodeFeature Space3Weight𝑦𝑦2 𝑥21 𝑥22 𝑥23 𝑥24 𝑥25 𝑥268425DBDecision Tree69 10 11 12 13 14terminal (leaf) node𝑥𝑦1 𝑥11 𝑥12 𝑥13 𝑥14 𝑥15 𝑥16Symbolism71

Learning and InferenceHightFeature SpaceWeightp ( x) Bayesian Theory 1 t 1exp (x μ) (x μ) 2 (2 )d / 2 1/ 21

Learning and InferenceHightNetwork TrainingInference (feedforward)Feature SpaceWeightgi (x) wti x wi 0 ,Connectionism

Convolutional Neural Networks𝑜 𝑓 𝑊, 𝑥

Supervised/Unsupervised Learning,HiddenObservedP ( i )UnsupervisedP (x 1 )P (x 2 )P (x 3 )Supervised

Generative/Discriminative Model Generating images𝑧1𝑧3𝑧2𝑥𝑝 𝑧1 , 𝑧2 , 𝑧3 𝑥Generative approach:Model 𝑝 𝑧, 𝑥 𝑝 𝑥 𝑧 𝑝(𝑧)Use Bayes’ theorem𝑝 𝑥 𝑧 𝑝(𝑧)𝑝 𝑧 𝑥 𝑝(𝑥)Discriminative approach:Model 𝑝 𝑧 𝑥 directly

Unsupervised Learning,Clustering: K-means, etc.Variational Auto-Encoder

Statistical Learning𝐿2 Loss𝐿 𝑑, 𝑓 𝑊, 𝑥 𝑑 𝑓 𝑊, 𝑥22Total Lossℒ 𝑊 𝑑 𝐿 , 𝑓 𝑊, 𝑥 𝑑𝑝(𝑥, 𝑑)where 𝑝(𝑥, 𝑑) is a joint PDF of 𝑥 and 𝑑, but unknownEmpirical Total Lossℒ𝑖 𝑊 1 𝑁σ𝐿𝑁 𝑛 1𝑑𝑛 , 𝑓 𝑊, 𝑥𝑛

Statistical Learning1𝑏𝑎𝑠𝑒 2 𝑏𝑖𝑡𝑠𝐼 𝑥𝑘 log( ) ቊ𝑏𝑎𝑠𝑒 𝑒 𝑛𝑎𝑡𝑠𝑝𝑘32 bits 𝑝𝑘 1/232 for uniform distribution 𝐼𝑘 32 bits① 𝐼 𝑥𝑘 0 for 𝑝𝑘 1② 𝐼 𝑥𝑘 0 for 0 𝑝𝑘 1③ 𝐼 𝑥𝑘 𝐼 𝑥𝑗 for 𝑝𝑘 𝑝𝑗Entropy : a measure of the average amount of informationconveyed per message, i.e., expectation of Information𝐻 𝑥 𝐸 𝐼 𝑥𝑘 𝑝𝑘 𝐼 𝑥𝑘 𝑝𝑘 log 𝑝𝑘𝑘𝑘

Statistical LearningEntropy becomes maximum when 𝑝𝑘 is equiprobable32 bits 𝑝𝑘 1/232 for uniform distribution 𝐼𝑘 32 bits232 11 0 𝐻(𝑋) log 32 32322𝑘 1 2 𝐻 𝑋 0 for an event 𝑝𝑘 1 and 𝑝𝑗 𝑘 0𝑜𝑘Cross Entropy Loss:ℒ W σ𝐾𝑘 𝑡𝑘 log 𝑓𝑘 𝑊, 𝑥𝑓𝑘 𝑊, 𝑥 𝑒 𝑎𝑘σ𝑗𝑎𝑒 𝑗1k j ℎ𝑗j1 K𝑎𝑘k wkj h jnet𝑤𝑘𝑗(softmax)𝑡𝑘 : target label (one hot: 0000100) 𝑎𝑗 j w ji xineti𝑤𝑗𝑖i𝑥𝑖 I

Statistical LearningCross Entropy Loss:𝐾ℒ W [𝑡𝑘 log 𝑓𝑘 𝑊, 𝑥 (1 𝑡𝑘 )log (1 𝑓𝑘 𝑊, 𝑥 )]𝑘𝑓𝑘 𝑊, 𝑥 1(sigmoid)1 𝑒 𝑎𝑘𝑡𝑘 : target label (multi-hot: 00110100)𝑜𝑘1 k j𝑤𝑘𝑗 ℎ𝑗j1 K𝑎𝑘k wkj h jnet 𝑎𝑗 j w ji xineti𝑤𝑗𝑖i𝑥𝑖 I

Statistical LearningTheorem (Gray 1990)𝑝𝑘 𝑝𝑘 log 0𝑞𝑘𝑘Relative entropy (or Kullback – Leibler divergence)𝐷𝐾𝐿 (𝑝 𝑞) 𝑝𝑘σ𝑘 𝑝𝑘 log𝑞𝑘𝐷𝐾𝐿 (𝑝 𝑞) 0 for 𝑝 𝑞𝑝𝑘 probability mass function𝑞𝑘 reference probability mass function

Scene and Object GenerationPose Transformer

Motion Retargeting

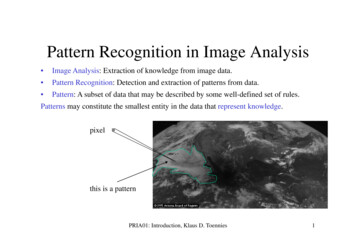

Outline of ML techniquesBayes RuleLikelihoodLearningML(P)EBayes. LPosterioriPrioriBayes DecisionGenerative ModelDiscriminative ModelGMLin. ClassifierLSSVMRandom ForestConvex O.HistogramK-NNEM, MCMCGMMK-SVMMCMC, VIBayesian NetDeep NNMLEBoltzm. MachineICAMCMC, VILatent DALinear DAMax. Separa.EMHMMPCAMax. ScatterVIParzen W.LearningEntropyK-SVDDSAConvex O.GABP(GD)NMK-L Divergence26

Course OutlineIntro. AI, ML, and DLIntro. Linear AlgebraIntro. Prob. & InformationBayesian Decision TheoryDim reduction PCA & LDALearning RulesSupport Vector MachineDeep Convolutional NetworksBayesian NetworksParametric pdf EstimationNon-Parametric pdf EstimationBoltzman MachineMarkov Chain Monte CarloInference of Bayesian Net, MCMCInference of Bayesian Net, VITraffic Pattern Analysis, VIRecent Papers- Active Learning- Imbalanced Data Learning- Out of Distribution- Weakly Supervised Learning- Etc.

Questions1. Describe the common things anddifferences among symbolism,connectionism, and Bayesian approach.2. Explain supervised/weekly-supervised/unsupervised learning.3. What is the difference betweendiscriminative and generative model?

Pattern Classification by Richard O. Duda, et al. . where ( , )is a joint PDF of and , but unknown Empirical Total Loss . Traffic Pattern Analysis, VI Recent Papers - Active Learning - Imbalanced Data Learning - Out of Distribution - Weakly Supervised Learning