
From UI Design Image to GUI Skeleton: A Neural Machine Translator to Bootstrap Mobile GUI Implementation

Chunyang Chen1, Ting Su1, Guozhu Meng1,3, Zhenchang Xing2, and Yang Liu1
1 School of Computer Science and Engineering, Nanyang Technological University, Singapore
2 Research School of Computer Science, Australian National University, Australia
3 SKLOIS, Institute of Information Engineering, Chinese Academy of Sciences
[...].sg, zhenchang.xing@anu.edu.au, yangliu@ntu.edu.sg

ABSTRACT
A GUI skeleton is the starting point for implementing a UI design image. To obtain a GUI skeleton from a UI design image, developers have to visually understand UI elements and their spatial layout in the image, and then translate this understanding into proper GUI components and their compositions. Automating this visual understanding and translation would be beneficial for bootstrapping mobile GUI implementation, but it is a challenging task due to the diversity of UI designs and the complexity of GUI skeletons to generate. Existing tools are rigid as they depend on heuristically-designed visual understanding and GUI generation rules. In this paper, we present a neural machine translator that combines recent advances in computer vision and machine translation for translating a UI design image into a GUI skeleton. Our translator learns to extract visual features in UI images, encode these features’ spatial layouts, and generate GUI skeletons in a unified neural network framework, without requiring manual rule development. For training our translator, we develop an automated GUI exploration method to automatically collect large-scale UI data from real-world applications. We carry out extensive experiments to evaluate the accuracy, generality and usefulness of our approach.

1 INTRODUCTION
Mobile applications (apps) are event-centric programs with rich Graphical User Interfaces (GUIs). An app’s GUI should not only provide a working interface for user interactions, but also create an intuitive and pleasant user experience.
In fact, the latter is crucial for an app’s success in the highly competitive market [46, 56]. Developing the GUI of an app routinely involves two separate but related activities: designing a UI and implementing a UI. Designing a UI requires proper user interaction, information architecture and visual effects of the UI, while implementing a UI focuses on making the UI work with proper layouts and widgets of a GUI framework. A UI design can be created from scratch or adapted from UI design kits [1] or existing apps’ GUIs, and it is usually conveyed to developers in the form of design images to implement.

A UI design image depicts the desired UI elements and their spatial layout in a matrix of pixels. To implement a UI design image using a GUI framework, developers must be able to translate the pixel-based depiction of the UI (or parts of the UI) into a GUI skeleton. As illustrated in Figure 1, a GUI skeleton defines what and how the components of a GUI builder (e.g., Android layouts and widgets) should be composed in the GUI implementation for reproducing the UI elements and their spatial layout in the UI design image. This GUI skeleton is like the initial “bootstrap instructions” which enable the subsequent GUI implementation (e.g., setting up font, color, padding, background image, etc.).

However, there is a conceptual gap between a UI design image (i.e., a UI design in a pixel language) and the GUI skeleton (i.e., the UI design in a language of GUI framework component names). To bridge this gap, developers need to have a good knowledge of a GUI framework’s components and what visual effects, interactions and compositions these components support in order to create an appropriate GUI skeleton for different kinds of UI elements and spatial layouts. If developers do not have this knowledge, the GUI implementation will get stuck, because modern GUI implementation cannot be achieved by hardcode-positioning some texts, images and controls.
This is especially the case for mobileapps that have to run on a wide range of screen sizes.To overcome the knowledge barrier between UI design imageand GUI skeleton, developers may attempt to figure out what andhow the GUI components should be composed for a UI design imagethrough a trial-and-error approach. Although modern GUI buildersprovide strong interactive support (e.g., drag & drop, what-you-seeis-what-you-get) for creating a GUI implementation, this type oftrail-and-error attempt would be very cumbersome and frustrating.First, a mobile app’s GUI often involves many GUI componentsand complex spatial layout (see Figure 6(b)). Second, a complexCCS CONCEPTS Software and its engineering; Human-centered computing Graphical user interfaces;KEYWORDSUser interface, reverse engineering, deep learningACM Reference Format:Chunyang Chen, Ting Su, Guozhu Meng, Zhenchang Xing, and Yang Liu.2018. From UI Design Image to GUI Skeleton: A Neural Machine Translatorto Bootstrap Mobile GUI Implementation. In Proceedings of ICSE ’18: 40thInternational Conference on Software Engineering , Gothenburg, Sweden, May27-June 3, 2018 (ICSE ’18), 12 pages.https://doi.org/10.1145/3180155.3180222 Correspondingauthors.Permission to make digital or hard copies of all or part of this work for personal orclassroom use is granted without fee provided that copies are not made or distributedfor profit or commercial advantage and that copies bear this notice and the full citationon the first page. Copyrights for components of this work owned by others than ACMmust be honored. Abstracting with credit is permitted. To copy otherwise, or republish,to post on servers or to redistribute to lists, requires prior specific permission and/or afee. Request permissions from permissions@acm.org.ICSE ’18, May 27-June 3, 2018, Gothenburg, Sweden 2018 Association for Computing Machinery.ACM ISBN 978-1-4503-5638-1/18/05. . . UCTION

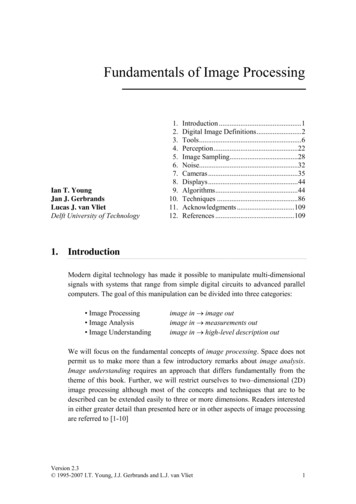

ICSE ’18, May 27-June 3, 2018, Gothenburg, ayoutFigure 1: Translating a design image into a Android GUI skeleton (not all)GUI framework supports dozens of layouts and widgets (some maybe interchangeable) and flexible composition of these layouts andwidgets. Developers can easily get lost during the trial-and-errorof an unfamiliar GUI framework.Alternatively, developers can learn from GUI framework tutorials or existing GUI implementations. To that end, they must beable to find some tutorials or GUI implementations that implementthe UI designs that are similar to the desired UI. Finding such tutorials or GUI implementations through the UI design image is achallenging image search task. It is also difficult to formulate aconcise, accurate text query of the UI design and the needed GUIcomponents for using information retrieval (IR) methods. Developers can also seek solutions for implementing a UI design fromthe developer community (e.g., Stack Overflow), but they may notalways be able to obtain useful advices in time.The UIs of apps can be very sophisticated to support complextasks, and they may undergo many revisions during the apps’ lifespan. Considering millions of apps being developed and maintained,automating the translation from UI design to GUI implementationwould be beneficial for mobile app development. Some tools [30, 45]can automatically generate the GUI implementation given a UI design image. This automatic, generative approach overcomes thelimitations of the trial-and-error, search-based or ask-developercommunity approaches for transforming UI design image into GUIskeleton. 
However, existing tools are rigid because they depend on hand-designed visual understanding and GUI generation templates which incorporate only limited UI-image-to-GUI-skeleton translation knowledge.

In this work, we present a deep learning architecture that distills the crowd-scale knowledge of UI designs and GUI implementations from existing apps and develop a generative tool to automatically generate the GUI skeleton given an input UI design image. Our generative tool can be thought of as an “expert” who knows a vast variety of UI designs and GUI skeletons to advise developers what and how the components of a GUI framework should be composed for implementing a UI design image.

To build this “expert”, we must tackle two fundamental challenges. First, to be a knowledgeable expert, the generative tool must be exposed to a knowledge source of a vast variety of UI designs and GUI skeletons from a large number of apps. Second, to advise developers how to translate a UI design into a GUI skeleton, the generative tool must not only capture the UI elements contained in a UI design image, but also express how these UI elements relate to each other in terms of a composition of the GUI components. In this paper, we present an automated GUI exploration technique for tackling the first challenge in knowledge source, and develop a neural machine translator that combines recent advances in computer vision and machine translation for tackling the second challenge in visual understanding and skeleton generation. The neural machine translator is end-to-end trainable using a large dataset of diverse UI screenshots and runtime GUI skeletons that are automatically collected during the automated GUI exploration of mobile app binaries.

We implement an Android UI data collector [52, 53] and use it to automatically collect 185,277 pairs of UI images and GUI skeletons from 5043 Android apps.
We adopt this dataset to train our neural machine translator and conduct an unprecedented large-scale evaluation of the accuracy of our translator for UI-image-to-GUI-skeleton generation. Our evaluation shows that our translator can reliably distinguish different types of visual elements and spatial layouts in very diverse UI images and accurately generate the right GUI components and compositions for a wide range of GUI skeleton complexity. We also apply our translator to the UIs of 20 Android apps that are not in our training set, and this study further confirms the generality of our translator. Through a pilot user study, we provide initial evidence of the usefulness of our approach for bootstrapping GUI implementations.

Our contributions in this work are as follows:
• We develop a deep-learning based generative tool for overcoming the knowledge barrier for translating UI images to GUI skeletons.
• Our generative tool combines CNN and RNN models for learning a crowd-scale knowledge of UI images and GUI skeletons from a large number of mobile apps.
• We develop an automated GUI exploration framework to automatically build a large dataset of UI images and GUI skeletons for training the deep learning models.
• We show our tool’s robust visual understanding and GUI skeleton generation capability through large-scale experiments, and provide initial evidence of our tool’s usefulness by a pilot user study.

2 PROBLEM FORMULATION
We formulate the UI-image-to-GUI-skeleton generation as a machine translation task. The input i to the machine translator is a UI design image (which can be regarded as a UI design in a pixel language, e.g., RGB color or grayscale pixels).
As shown in Figure 2, the machine translator should be able to “translate” the input UI design image into a GUI skeleton, i.e., a composition of some container components (i.e., the non-leaf nodes) and atomic components (i.e., the leaf nodes) of a GUI framework.

A GUI skeleton can be regarded as the UI design in a GUI framework language whose vocabulary consists of the component names of the GUI framework, such as Android’s RelativeLayout, TextView, ImageButton, and two special tokens (e.g., brackets “{” and “}”) expressing the composition of GUI components. As shown in Figure 2, a component hierarchy can be represented as an equivalent token sequence via depth-first traversal (DFT) and using “{” and “}” to enclose a container’s contained components in the token sequence. In this work, we use the token sequence representation of the GUI skeleton as the output of the machine translator.
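The token sequence representation described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the `Node` class, the function names `to_tokens` and `from_tokens`, and the small example tree are all hypothetical and chosen only for illustration. The sketch serializes a component hierarchy by depth-first traversal, wrapping each container's children in “{” and “}”, and parses the sequence back to show that the two representations are equivalent.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Node:
    """A GUI component; containers have children, atomic components do not."""
    name: str
    children: List["Node"] = field(default_factory=list)


def to_tokens(node: Node) -> List[str]:
    """Depth-first traversal: emit the component name, then its children in braces."""
    tokens = [node.name]
    if node.children:  # assumption: a container with no children is emitted without braces
        tokens.append("{")
        for child in node.children:
            tokens.extend(to_tokens(child))
        tokens.append("}")
    return tokens


def from_tokens(tokens: List[str]) -> Node:
    """Rebuild the hierarchy from a well-formed token sequence (inverse of to_tokens)."""

    def parse(pos: int) -> Tuple[Node, int]:
        node = Node(tokens[pos])
        pos += 1
        if pos < len(tokens) and tokens[pos] == "{":
            pos += 1  # skip "{"
            while tokens[pos] != "}":
                child, pos = parse(pos)
                node.children.append(child)
            pos += 1  # skip "}"
        return node, pos

    root, end = parse(0)
    if end != len(tokens):
        raise ValueError("trailing tokens after the root component")
    return root


if __name__ == "__main__":
    # Hypothetical hierarchy, not the one shown in Figure 2.
    tree = Node("RelativeLayout", [
        Node("LinearLayout", [Node("TextView"), Node("EditText")]),
        Node("ImageButton"),
    ])
    print(" ".join(to_tokens(tree)))
    # prints: RelativeLayout { LinearLayout { TextView EditText } ImageButton }
```

Because the braces make the nesting explicit, a flat sequence is enough for a sequence decoder to express an arbitrarily deep hierarchy, and `from_tokens(to_tokens(tree))` returns a tree equal to the original.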

Figure 2: An example of UI-image-to-GUI-skeleton generation

3 NEURAL MACHINE TRANSLATOR
Unlike normal machine translation tasks where both source and target languages are text data, our machine translation task requires joint processing of image and text data. Furthermore, unlike text, which is a sequence of words, our input UI design image contains the spatial layout information of UI elements, and our output GUI skeleton is a hierarchical composition of GUI components. Taking into account these characteristics of our task, we design a neural machine translator which integrates a vision Convolutional Neural Network (CNN) [35, 57], a Recurrent Neural Network (RNN) encoder and an RNN decoder [15, 60] in a unified framework. As shown in Figure 3, given an input UI image, the vision CNN extracts a diverse set of image features through a sequence of convolution and pooling operations. The RNN encoder then encodes the spatial layout information of these image features into a summary vector C, which is then used by the RNN decoder to generate the GUI skeleton in token sequence representation.

3.1
A convolutional layer performs convolution operations using filters (or kernels). A filter is a neuron that learns to look for some visual features (e.g., various oriented edges) in the input. The filters in a layer will only be connected to the local regions of the input volume. The spatial extent of this local connectivity is called the receptive field
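To make the idea of local connectivity concrete, the following is a minimal pure-Python sketch of a single filter sliding over a single-channel input. It is only an illustration under stated assumptions, not the network used in the paper: the function name `conv2d_valid`, the stride of 1, the absence of padding, and the hand-written edge filter are all assumptions (in a trained CNN the filter weights are learned). As in most deep learning libraries, the operation is implemented as cross-correlation, i.e., without flipping the kernel.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """Slide one filter over a 2D input (stride 1, no padding).

    Each output value depends only on a kh x kw patch of the input,
    which is the filter's receptive field.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            # Only the local patch starting at (r, c) contributes to this output.
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output


if __name__ == "__main__":
    # A 4x4 input with a vertical edge between the second and third columns.
    image = [[0, 0, 1, 1] for _ in range(4)]
    # A hand-written 2x2 filter that responds to dark-to-bright vertical edges.
    kernel = [[-1, 1], [-1, 1]]
    for row in conv2d_valid(image, kernel):
        print(row)
    # prints [0, 2, 0] three times: the filter fires only where the edge is.
```

The 2x2 filter here has a receptive field of 2x2 pixels; the same weights are reused at every position, which is why a single filter can detect the same visual feature anywhere in the image.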
