Transcription

Efficient Featurized Image Pyramid Network for Single Shot DetectorYanwei Pang1 , Tiancai Wang1 , Rao Muhammad Anwer2 , Fahad Shahbaz Khan2,3 , Ling Shao21School of Electrical and Information Engineering, Tianjin University2Inception Institute of Artificial Intelligence, UAE3Computer Vision Laboratory, Department of Electrical Engineering, Linköping University, e object detectors have recently gained popularity due to their combined advantage of high detectionaccuracy and real-time speed. However, while promisingresults have been achieved by these detectors on standardsized objects, their performance on small objects is far fromsatisfactory. To detect very small/large objects, classicalpyramid representation can be exploited, where an imagepyramid is used to build a feature pyramid (featurized imagepyramid), enabling detection across a range of scales. Existing single-stage detectors avoid such a featurized imagepyramid representation due to its memory and time complexity. In this paper, we introduce a light-weight architecture to efficiently produce featurized image pyramid in asingle-stage detection framework. The resulting multi-scalefeatures are then injected into the prediction layers of thedetector using an attention module. The performance of ourdetector is validated on two benchmarks: PASCAL VOCand MS COCO. For a 300 300 input, our detector operates at 111 frames per second (FPS) on a Titan X GPU,providing state-of-the-art detection accuracy on PASCALVOC 2007 testset. On the MS COCO testset, our detector achieves state-of-the-art results surpassing all existingsingle-stage methods in the case of single-scale inference.1. IntroductionGeneric object detection is one of the fundamental problems in computer vision, with numerous real-world applications in robotics, autonomous driving and video surveillance. Recent advances in generic object detection havebeen largely attributed to the successful deployment ofconvolutional neural networks (CNNs) in detection frameworks. Generally, deep object detection approaches can Equalcontributionbe roughly divided into two categories: two-stage [13, 14,16, 29] and single-stage detectors [19, 28, 5]. In two-stageapproaches, object proposals are first generated and laterclassified and regressed. Single-stage approaches, on theother hand, directly regress the default anchors into detection boxes by sampling grids on the input image. Singlestage object detectors are generally computationally efficient but inferior in detection accuracy compared to theirtwo-stage counterparts [18].Among single-stage methods, the Single Shot MultiboxDetector (SSD) [28] has recently been shown to provide anoptimal tradeoff between speed and detection accuracy. Thestandard SSD utilizes a VGG-16 architecture as the basenetwork and adds further convolutional (conv) feature layers to the end of the truncated base network. In SSD, independent predictions are made by layers of varying resolutions, where shallow or former layers contribute to predicting small objects while deep or later layers are devoted todetecting large objects. Despite its success, SSD strugglesto handle large scale variations across object instances. Inparticular, the detection performance of SSD on small objects is far from satisfactory [18], which is likely due to thelimited information in shallow or former layers.Multiple solutions have been proposed in the literature toalleviate the issues stemming from scale variations. Featurepyramid is an essential component in many recognition systems, forming the basic ground for a standard solution [1].Building feature pyramids from image pyramids (featurizedimage pyramids) has long been pursued and employed inmany classical hand-crafted methods [11, 9]. Modern deepobject detectors also typically employ some forms of pyramid representation, even though the CNNs used in theseapproaches are robust to scale variation.For two-stage methods, earlier works [29, 13] advocatedthe use of single scale features (see Fig. 1(c)). In contrast,recent two-stage methods [24] have investigated featurepyramid to obtain more accurate detection (see Fig. 1(b)).17336

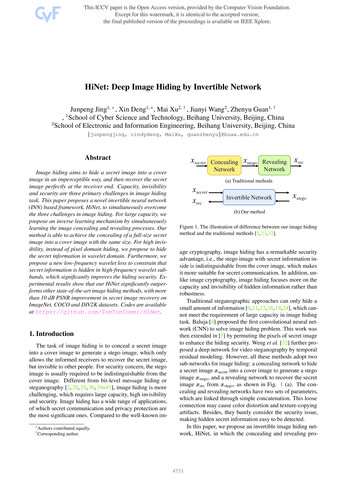

(a) Featurized image pyramid(d) Feature pyramid hierarchyImageStandard feature map(b) Feature pyramid(c) Singe scale feature(e) OursLight-weight feature mapPrediction feature mapFigure 1. Comparison of our approach with different architecturesfor multi-scale object detection. (a) Image pyramid for buildingfeature pyramid where features are constructed from images ofvarious scales independently. (b) Feature pyramid network employed in [24] combining features in a layer by layer top-down fusion scheme. (c) Single scale features for faster detection utilizedin Fast and Faster R-CNN [13, 29]. (d) Pyramidal feature hierarchy employed in standard SSD where feature pyramid is constructed by a CNN [28]. (e) Our architecture is accurate like (a)but efficient due to the proposed light-weight convolutional block(Sec. 3.2) and integrated with (d).Here, the objective is to leverage high-level semantics byup-sampling low-resolution feature maps and fusing themwith high-resolution feature maps. However, such an approach is still sub-optimal for very small and large sized objects [32]. For very small objects, even a large up-samplingfactor cannot match the typical resolution (224 224) ofpre-trained networks. Consequently, the high-level semantic features generated by the feature pyramid network willstill not be adequate for very small object detection and viceversa. Further, such an approach is computationally expensive due to the layer-by-layer fusion of many layers.In the case of single-stage methods, SSD exploits multiple CNN layers in a pyramidal feature hierarchy, producingfeature maps of varying spatial resolutions (see Fig. 1(d)).However, trading spatial resolution at the cost of high-levelsemantic information can affect the performance. In thiswork, we aim to improve the accuracy of SSD without sacrificing its hallmark speed. We re-visit the classical imagepyramid approach (see Fig. 1(a)), where feature maps ofvarying scales are generated by applying a CNN on eachof the image scales separately, in a single-stage detectionframework. However, the standard image pyramid basedfeature representation (featurized image pyramids) is slowsince each image scale is passed through a deep CNN to extract scale-specific feature maps, thereby making its usageimpractical for a high-speed SSD.Contributions: We introduce a light-weight featurizedimage pyramid network (LFIP) to produce a multi-scalefeature representation. Within the LFIP network (seeFig. 1(e)), an input image is first iteratively downsampled toconstruct an image pyramid hierarchy, which is then fed toa shallow convolutional block, resulting in a feature pyramid where each level of an image pyramid is featurized.Multi-scale features from the feature pyramid are then combined with the standard SSD features, in an attention module, in order to boost the discriminative power. Furthermore, we introduce a forward fusion module to integratefeatures from both the former and current layers.We perform extensive experiments on two benchmarks:PASCAL VOC and MS COCO. Our detector provides superior results on both datasets compared to existing singlestage methods. Further, our approach provides significantlyimproved results on small objects achieving an absolutegain of 7.4% in average precision (AP) on MS COCO smallset, compared with the baseline SSD.2. Baseline Detector: SSDWe base our approach on the SSD [28] which employsa VGG-16 architecture as the backbone network. Given aninput image I of size 300 300, the SSD uses conv4 3 layerwith feature size 38 38 and F C 7 (converted into a convlayer) with feature size 19 19 from the original VGG-16architecture. It truncates the last fully connected layer ofthe VGG-16 network and further adds a series of progressively smaller conv layers: conv8 2, conv9 2, conv10 2and conv11 2, with feature size 10 10, 5 5, 3 3 and1 1, respectively, at the end for detection. The detector adopts a pyramidal hierarchical structure where shallowlayers (i.e. conv4 3) predict small object instances and deeplayers (i.e. conv8 2) detect large object instances. In thisway, each of the aforementioned layers are used for scorepredictions and offsets, over a predefined set of boundingboxes. The score predictions are performed by 3 3 N filter dimensions, where N is the number of channels. Consequently, non-maximum suppression (NMS) is applied toobtain final detection scores. We refer to [28] for details.As mentioned above, the standard SSD localizes objectsin a pyramidal hierarchy by exploiting multiple CNN layers, with each layer designated to detect objects of a specific scale. This implies that small object instances are detected using former layers with small receptive fields, whiledeep layers with large receptive fields are used to localizelarge object instances. However, the SSD struggles to accurately detect small object instances due to limited information in shallow layers, compared to deep layers [18]. Fu etal. [12] proposed to use deconvolution layers to introducelarge-scale context and a better feature extraction network(ResNet) to improve the accuracy. Cao et al. [4] also investigated the problem of small object detection and introduced contextual information to the SSD. However, theseapproaches improve SSD at the cost of reduction in speed.Further, the additional contextual information may introduce unnecessary background noise, resulting in a deterioration of accuracy in some cases. Zhang et al. [34] extended7337

SfFeature Attention ModuleForward Fusion ModuleC9Light-weight feature High-level feature(b) Feature Attention ModuleLight-weight Convolutional BlockReLUC8BNFc7 BNC4fBNS3x3 convf3x3 convSReLUfBNSDetection Conv LayerSInput imageLow-level featureStandard SSD feature(c) Forward Fusion Module1x1 5121x1 10241x1 5123x3 1283x3 2563x3 1283x3 641x1 1281x1 2561x1 1281x1 643x3 1283x3 2563x3 1283x3 641x1 256DownsamplingLight-weight featurizedimage pyramid networkIterative DownsamplingInput image(a) Overall architecture of our detector(d) Light-weight featurized image pyramid network (LFIP)Figure 2. (a) Overall architecture of our single-stage object detector. Our approach extends the SSD with a light-weight featurized imagepyramid network (LFIP) whose architecture is shown in (d). Within the LFIP network, an input image is first iteratively downsampledto construct an image pyramid hierarchy. The image pyramid hierarchy is then input to a shallow convolutional block which producesa feature pyramid by featurizing each level of the image pyramid. The resulting feature pyramid is then injected into the standard SSDprediction layers using an attention module shown in (b). We also introduce a forward fusion module to integrate the modulated featuresfrom both the former and current layers, shown in (c).the standard SSD by integrating a semantic segmentationbranch. Instead, we re-visit the classic approach of buildingfeature pyramid from image pyramid without sacrificing thehallmark speed of the SSD.Conv Block PoolingImageConv Block PoolingX8 features(a) Standard Feature ExtractionImage DownsamplingShallow Conv BlockX8 features(b) Proposed Light-weight Feature Extraction3. MethodHere, we first describe the overall architecture of our approach and introduce an alternative feature extraction strategy, utilized in our light-weight featurized image pyramidnetwork module. Afterwards, we introduce feature attention and forward fusion modules. The overall architectureof our detector, named LFIP-SSD, is illustrated in Fig. 2(a).LFIP-SSD comprises of two main parts: the standard SSDnetwork and the proposed light-weight featurized imagepyramid network (LFIP) to produce a feature pyramid representation. As in [28], we employ VGG-16 as the backbone and add a series of progressively smaller conv layers.Different to the standard SSD, LFIP contains an iterativedownsampling and a light-weight convolutional block. Features from the LFIP are then injected into the standard SSDlayers using an attention module. The resulting features ofthe current layer are then fused with their former layer counterpart in a forward fusion module.3.1. Feature Extraction StrategyConventional object detection frameworks typically extract features either from a VGG-16 or ResNet-50, in a repeated stack of convolutional blocks and max-pooling operations (see Fig. 3(a)). Though the resulting features areFigure 3. Comparison of our feature extraction strategy, employedin the LFIP network, with its standard SSD counterpart. (a) Standard SSD feature extraction: convolution block together with repeated stride and max-pooling operations to generate features.Here, ”X8” shows that downsmapling is performed with a strideof 8. (b) proposed Feature Extraction in LFIP: the input image isfirst downsampled to the target size and then a shallow convolutionblock is used to extract features.semantically strong, they tend to lose discriminative information that likely contributes towards accurate object classification. As an alternative, we introduce an efficient feature extraction strategy (see Fig. 3(b)). In our strategy, aninput image is first downsampled, either by interpolationor a pooling operation, to the desired target size of differentSSD prediction layers. These downsampled images are thenpassed through a shallow convolutional block. Comparedto the deep CNNs in the traditional image pyramid network,our shallow convolutional block provides the computationalefficiency required for high-speed detection, while enhancing discriminative information for multi-scale detection.3.2. Light-weight Featurized Image PyramidAs discussed earlier, standard featurized image pyramidsare inefficient since each image scale is passed through a7338

deep CNN to extract scale-specific feature maps. Highspeed single-stage detectors therefore tend to avoid sucha featurized image pyramid representation. Here, we propose a simple, yet effective solution to efficiently constructa light-weight featurized image pyramid (LFIP) representation. As shown in Fig. 2(d), the LFIP network comprises ofan iterative downsampling part and a light-weight convolutional block. Given an input image I, an image pyramid Ipis first constructed through iterative downsapling:Ip {i1 , i2 , . . . , in }(1)where n denotes the number of image pyramid levels.Image scales in the pyramid are selected to match the sizesof standard SSD prediction layer maps, such as conv4 3.Afterwards, each of the image scales is passed througha shallow convolutional block to generate the multi-scalelight-weight feature maps:Sp {s1 , s2 , . . . , sn }(2)where s1 denotes the light-weight features for theconv4 3 layer of standard SSD network and sn representsthe last features generated for the conv9 2 layer of the SSDnetwork. The shallow convolutional block includes one3 3 convolution layer and a bottleneck block, as in [17],but without the identity shortcut. The identity shortcut hasbeen skipped due to the shallow nature of our convolutional block. The conv layers in our shallow convolutionalblock vary in the number of channels to match the resultinglight-weight featurized image pyramids with that of standard SSD feature maps.3.3. Feature Attention ModuleHere, we describe how the light-weight featurized image pyramid, generated from our LFIP network, is injectedinto the standard SSD prediction layers. We introduce afeature attention module (FAM), as shown in Fig. 2(b).First, both the light-weight featurized image pyramid andstandard SSD feature maps are passed through a BatchNorm (BN) layer for normalization. We consider different ways to fuse the normalized feature set: concatenation,element-wise sum and element-wise product. We foundthat element-wise product provides the best performance.Consequently, we employ a ReLU activation and a 3 3conv layer to generate modulated features. For an input image I, standard SSD features fk from the k th SSD prediction layer are combined with the corresponding light-weightLFIP features sk as:mk ϕk (β(fk ) β(sk ))(3)where mk are the modulated features after fusion, ϕk (.)denotes the operation including the serial ReLU and 3 3conv layer, and β(.) denotes the BN operation. As shown(a) Input image(b) SSD feature(c) Modulated featureFigure 4. Comparison of feature maps obtained from the conv4 3layer in standard SSD and our modulated features after featureattention module.in Fig. 4, our modulated features enhance the discriminativepower of standard SSD features.3.4. Forward Fusion ModuleTo further enhance the spatial information, we introduce a simple forward fusion module (FFM) to integratemodulated features from both the former and current layers (Fig. 2(c)). We employ the FFM module for layersfrom F C 7 to conv9 2. Within FFM, each previous layeris first pass through a 3 3 conv layer to achieve the samesize as the current layer. Afterwards, former mk 1 and current mk modulated features are passed through BatchNorm(BN) and combined using an element-wise sum operation.This is followed by a ReLU operation to produce the finalprediction dk as:dk γ(φk (mk 1 ) β(mk ))(4)where φk (.) denotes the operation including the serial3x3 conv and BN layers, β(.) is the BN operation, and γ isthe ReLU activation.4. ExperimentsWe validate our approach by conducting experiments ontwo datasets: PASCAL VOC and MS COCO. We first introduce the two datasets and then discuss the implementationdetails of our proposed detector. We compare our detectorwith state-of-the-art object detection methods from the literature and also provide a comprehensive ablation study onthe PASCAL VOC 2007 dataset.7339

4.1. DatasetsPASCAL VOC [10]: The PASCAL VOC dataset consistsof 20 different object categories. For this dataset, the training is performed on a union of the VOC 2007 trainval setwith 5k images and the VOC 2012 trainval set with 11k images. Evaluation is carried out on the PASCAL VOC 2007test with 5k images. Object detection accuracy is measuredin terms of mean Average Precision mAP.MS COCO [26]: The MS COCO dataset consists of 160kimages with 80 object categories. The dataset contains80k training, 40k validation and 40k test-dev images. ForMS COCO, training is performed on 120k images from thetrainval set and evaluation is carried out on the test-dev set.We follow the standard MS COCO protocol for evaluation,where the overall performance, average precision AP, ismeasured by averaging over multiple IOU thresholds, ranging from 0.5 to 0.95.MethodsSSD [28]R-SSD [20]RUN [23]ESSD [35]DSSD [12]DES [34]WeaveNet [6]RefineDet VGG-16VGG-16VGG-16VGG-16input size300 300300 300300 300300 300321 321300 300320 320320 320300 40259.576.85040.3111Table 1. Speed and performance comparisons of our method withexisting single-stage detectors on PASCAL VOC 2007 test set. Forall detectors, the input image size is approximately 300x300.For a fair comparison, the speed for all detectors is measured on asingle Titan X GPU (Maxwell architecture). The best two resultsare shown in red and blue. Our detector improves the detectionaccuracy by 3.2% in mAP over the baseline SSD. Further, our detector provides an optimal trade-off between detection accuracyand speed compared to existing single-stage detectors.4.2. Implementation DetailsAll experiments are conducted using VGG-16 [31], pretrained on ImageNet [30], as the backbone. Our full training and testing code is built on Pytorch and will be publiclyavailable. We follow the similar settings as the baselineSSD [28] for model initialization and optimization. Thewarm-up strategy is adopted for the first six epochs. Thelearning rate is first set to 2 10 3 and gradually decreasesto 10 4 and 10 5 at 150 and 200 epochs, respectively forthe PASCAL VOC dataset. In the case of MS COCO, thesame learning rate values (used in the PASCAL VOC) decreases at 90 and 120 epochs. Following [28], we use thesame loss function, scales and aspect ratios of the defaultsboxes and the data augmentation method. We set the weightdecay to 0.0005 and the momentum to 0.9. The batch-sizeis set to 32 for both datasets. A total number of 250 and160 epochs are performed for the PASCAL VOC and MSCOCO datasets, respectively. The FLOPs of VGG backbone and LFIP are 1.6G and 0.9G, respectively. The FLOPsof LFIP mainly come from convolution operations followedby element-wise multiplication and addition.4.3. Pascal VOC 2007We first compare our detector with the baseline SSD andother existing single-stage detectors. For a fair comparison,we use the same settings for both our detector and the baseline SSD. Tab. 1 shows the comparison, in terms of speedand detection accuracy, of our detector with both the baseline SSD and several other single-stage detection methods.The baseline SSD achieves a detection mAP score of 77.2while running at 120 FPS. Among existing single-stage detectors, RefineDet [33] and DES [34] provide detection accuracies of 80.0 and 79.7 mAP while running at 40 and 77FPS, respectively. Our detector provides an optimal tradeoff between detection accuracy and speed by providing adetection accuracy of 80.4 mAP while running at 111 FPS.State-of-the-art Comparison: Here, we compare our detector with state-of-the-art single and two-stage detectionmethods. Tab. 2 shows a per-class comparison for varying input image sizes. Generally, two-stage object detectionmethods [29, 7] take a large image as input ( 1000 600)compared to their single-stage counterparts. Among twostage object detectors, CoupleNet [36] with multi-scale testing provides improved performance with 82.7 mAP. Amongsingle-stage methods, RefineDet [33] achieves a detectionaccuracy of 81.8 when using an input image of size ( 512 512). With the same input image size, our detector achieves similar detection accuracy while providing a2.7-fold speedup compared to RefineDet [33].Runtime Analysis: Fig. 5 shows the accuracy vs speedcomparison of our detector with state-of-the-art single andtwo-stage methods, on the VOC 2007 test set. All detectionspeeds are measured on a single Titan X GPU (Maxwell architecture). Our detector processes an image at 111 FPSwhereas the baseline SSD runs at 120 FPS. Among existingmethods, the two-stage CoupleNet [36] provides superiordetection results with a speed of 8 FPS. Our detector provides a 13-fold speedup compared to CoupleNet [36].4.4. Ablation Study on PASCAL VOC 2007We conduct an ablation study to validate the effectiveness of different modules proposed in our detector. We analyze the impact on detection performance of different downsampling strategies, various convolutional block depths andthe light-weight multi-scale features.Downsampling Strategies: We investigate three commonly used downsampling strategies to construct the imagepyramid: bilinear interpolation, max pooling and averagepooling. Tab. 3 (left) shows the comparison when using7340

MethodsTwo-Stage Detector:Faster-RCNN [29]Faster-RCNN [17]ION [2]HyperNet [22]R-FCN [7]CoupleNet MS [36]Single-Stage Detector:SSD [28]RON [21]DSSD [12]RefineDet [33]OursSSD [28]DES [34]DSSD [12]RefineDet [33]OursBackboneinput sizemAP speed aero bike bird boat bottle bus car cat chair cow table dog horse mbike person plant sheep sofa train 1000 6001000 6001000 6001000 6001000 6001000 -16VGG-16VGG-16VGG-16ResNet-101VGG-16VGG-16300 300384 384321 321320 320300 300512 512512 512513 513512 512512 379.479.479.180.080.583.782.382.9Table 2. Per-class state-of-the-art comparison on the PASCAL VOC 2007 dataset. All detection methods are trained on the union ofVOC2007 and VOC2012 trainval and tested on VOC2007 test. When comparing with single-stage detectors, our number is marked in redand blue if it is the best two in the column. Our two detection methods have exactly the same settings except having different input sizes(300 300 and 512 512). Our detector achieves promising results and provides a good trade-off between detection accuracy and speed,compared to state-of-the-art approaches in literature.MethodsBilinear interpolationAverage poolingMax poolingCoupleNetmAP VOC2007 OLOv2SSDIONRON7470FRCN04080Frames per second (fps)mAP79.879.980.0MethodsProgressive decrementConstant depthProgressive incrementmAP79.680.080.2Table 3. Analyzing the impact of different downsampling strategies when constructing the image pyramid (left). Here, we consider bilinear interpolation, average pooling and max poolingdownsampling strategies. We also analyze the impact of networkdepth on the shallow convolutional block (right).Add-onconv 4 3conv 7conv 8 2conv 9 2with e 5. Accuracy vs speed comparison on the PASCAL VOC2007 test set. For fair comparison, all detectors are trained on theVOC 2007 2012 trainval and the speed is measured on a singleTitan X GPU. For two-stage detectors, an input image size of 1000 600 is used. All single-stage detectors use an input imagesize of 300 300 except YOLOv2 (544 544). Our detectorachieves a 9-fold speedup compared to the two-stage CoupleNet.Table 4. Ablation results on PASCAL VOC 2007 dataset withmulti-scale Light-Weight Feature fusion at convolutional featuresat different stages of SSD model.different downsampling strategies. The results show thatchanging the downsampling strategies has negligible effecton the overall detection results, though max pooling provides the best performance of 80.0 mAP.Shallow Convolutional Block Depth: Here, we analyzedifferent depths of the shallow convolutional block. Weconsider three different strategies: constant depth, progressive incrementation and progressive decrementation. Forconstant depth, same number of convolution layers are usedfor different levels in the shallow convolutional block. Inprogressive incrementation, we progressively increase thedepth of the shallow convolutional block for the corresponding deeper prediction layers. In progressive decrementation,we progressively decrease the depth of the shallow convolutional block for the corresponding deeper prediction layers. Tab. 3 (right) shows the impact of using different depthstrategies for the shallow convolutional block. The progressive incrementation provides the best results.Impact of LFIP on SSD Prediction Layers: Here, we analyze the impact of our LFIP representation on the standardSSD. We perform an experiment by systematically injecting the LFIP representation at different stages of the stan734177.2X80.4

MethodsTwo-Stage Detector:Faster [29]Faster-FPN [24]R-FCN [7]Deformable R-FCN [8]Mask-RCNN [15]Cascade R-CNN [3]Single-Stage Detector:SSD [28]DSSD [12]RefineDet [33]RFBNet [27]OursSSD [28]DSSD [12]RefineDet [33]RetinaNet [25]RFBNet [27]OursBackboneInput NResNet-101ResNet-101ResNeXt-101-FPNResNet-101-FPN 1000 600 1000 600 1000 600 1000 600 1280 800 1280 et-101VGG-16ResNet-101-FPNVGG-16VGG-16300 300321 321320 320300 300300 300512 512513 513512 512 832 500512 512512 47.644.445.946.343.551.144.349.147.647.1Table 5. State-of-the-art comparison on MS COCO test-dev set. When using 300 300 and 512 512 input image sizes, our detectorimproves the overall detection performance by 4.9% and 5.8% in AP, respectively, compared to the baseline SSD.Figure 6. Qualitative results of our detector on the PASCAL VOC 2007 test set (corresponding to 81.8 mAP). The model was trained onall the train and validation datasets in VOC 2007 and VOC 2012. Each color is related to an object category.dard SSD. Tab. 4 shows the detection results when injectingthe LFIP representation at different stages of the standardSSD. A large gain (2.2%) in mAP is achieved when integrating

pyramid network (LFIP) whose architecture is shown in (d). Within the LFIP network, an input image is first iteratively downsampled to construct an image pyramid hierarchy. The image pyramid hierarchy is then input to a shallow convolutional block which produces a feature pyramid by featurizing each level of the image pyramid.