Transcription

Domain AdaptationJohn Blitzer and Hal Daumé III

Classical “Single-domain” LearningPredict:Running with ScissorsTitle: Horrible book, horrible.This book was horrible. I read half, suffering from a headache the entire time, and eventually ilit it on fire. 1 less copy in the world. Don't waste your money. I wish i had the time spentreading this book back. It wasted my lifeSothe topic ofahthe talk today is online learning

Domain AdaptationSourceTrainingSoTestingthe topic ofahthe talk today is online learningTargetEverything is happening online. Even theslides are producedon-line

Domain AdaptationNatural Language ProcessingPacked with fascinating infoA breeze to clean upVisual Object Recognition

Domain AdaptationNatural Language ProcessingPacked with fascinating infoA breeze to clean upVisual Object Recognition

Tutorial Outline1. Domain Adaptation: Common Concepts2. Semi-supervised Adaptation Learning with Shared SupportLearning Shared Representations3. Supervised Adaptation Feature-Based ApproachesParameter-Based Approaches4. Open Questions and Uncovered Algorithms

Classical vs Adaptation ErrorClassical Test Error:Measured on thesame distribution!Adaptation Target Error:Measured on anew distribution!

Common Concepts in AdaptationCovariate Shiftunderstands both&understands both&Single Good HypothesisDomain Discrepancy and ErrorEasyHard

Tutorial Outline1. Notation and Common Concepts2. Semi-supervised Adaptation Covariate shift with Shared SupportLearning Shared Representations3. Supervised Adaptation Feature-Based ApproachesParameter-Based Approaches4. Open Questions and Uncovered Algorithms

A bound on the adaptation errorMinimize the total variation

Covariate Shift with Shared SupportAssumption: Target & Source Share SupportReweight source instances to minimize discrepancy

Source Instance ReweightingDefining ErrorUsing Definition of ExpectationMultiplying by 1Rearranging

Sample Selection BiasAnother Way to View1) Draw from the target

Sample Selection BiasRedefine the source distribution1) Draw from the target2) Select into the source with

Rewriting Source RiskRearrangingnot dependent on x

Logistic Model of Source SelectionTraining Data

Selection Bias Correction AlgorithmInput:Labeled source data

Selection Bias Correction AlgorithmInput:Labeled source dataUnlabeled target data

Selection Bias Correction AlgorithmInput: Labeled source and unlabeled target data1) Label source instances as, target as

Selection Bias Correction AlgorithmInput: Labeled source and unlabeled target data1) Label source instances as2) Train predictor, target as

Selection Bias Correction AlgorithmInput: Labeled source and unlabeled target data1) Label source instances as2) Train predictor3) Reweight source instances, target as

Selection Bias Correction AlgorithmInput: Labeled source and unlabeled target data1)2)3)4)Label source instances asTrain predictorReweight source instancesTrain target predictor, target as

How Bias gets Corrected

Rates for Re-weighted LearningAdapted from Gretton et al.

Sample Selection Bias SummaryTwo Key AssumptionsAdvantageOptimal target predictorwithout labeled target data

Sample Selection Bias SummaryTwo Key AssumptionsAdvantageDisadvantage

Sample Selection Bias ferences/[1] J. Heckman. Sample Selection Bias as a Specification Error. 1979.[2] A. Gretton et al. Covariate Shift by Kernel Mean Matching. 2008.[3] C. Cortes et al. Sample Selection Bias Correction Theory. 2008[4] S. Bickel et al. Discriminative Learning Under Covariate Shift. 2009.

Tutorial Outline1. Notation and Common Concepts2. Semi-supervised Adaptation Covariate shiftLearning Shared Representations3. Supervised Adaptation Feature-Based ApproachesParameter-Based Approaches4. Open Questions and Uncovered Algorithms

Unshared Support in the Real World.Running with ScissorsAvante Deep Fryer; BlackTitle: Horrible book, horrible.Title: lid does not work well.This book was horrible. I read half,I love the way the Tefal deep fryersuffering from a headache the entirecooks, however, I am returning mytime, and eventually i lit it on fire. 1second one due to a defective lidless copy in the world. Don't wasteclosure. The lid may close initially,your money. I wish i had the timebut after a few uses it no longerspent reading this book back. It wastedstays closed. I won’t be buying thismy lifeone again.?.?

Unshared Support in the Real World.Running with ScissorsAvante Deep Fryer; BlackTitle: Horrible book, horrible.Title: lid does not work well.This book was horrible. I read half,I love the way the Tefal deep fryercooks, however,I am returning myError increase: 13%26%suffering from a headache the entiretime, and eventually i lit it on fire. 1second one due to a defective lidless copy in the world. Don't wasteclosure. The lid may close initially,your money. I wish i had the timebut after a few uses it no longerspent reading this book back. It wastedstays closed. I won’t be buying thismy lifeone again.?.?

Linear Regression for Rating Predictionexcellent10010.1 1.5.-1.1 2 -0.3.0.5 10.0.3fascinatinggreat

Coupled SubspacesNo Shared SupportSingle Good Linear HypothesisStronger than

Coupled SubspacesNo Shared SupportSingle Good Linear HypothesisCoupled Representation Learning

Single Good Linear Hypothesis?Adaptation Squared .19

Single Good Linear Hypothesis?Adaptation Squared .381.191.23

Single Good Linear Hypothesis?Adaptation Squared .801.381.681.191.23

A bound on the adaptation errorWhat if a single good hypothesis exists?A better discrepancy than total variation?

A generalized discrepancy distanceMeasure how hypotheses make mistakesLinear, binary discrepancy region

A generalized discrepancy distanceMeasure how hypotheses make mistakeslowlowhigh

Discrepancy vs. Total VariationDiscrepancyComputable from finite samples.Total VariationNot computable in general

Discrepancy vs. Total VariationDiscrepancyComputable from finite samples.Total VariationNot computable in general

Discrepancy vs. Total VariationDiscrepancyComputable from finite samples.Total VariationNot computable in generalLow?

Discrepancy vs. Total VariationDiscrepancyComputable from finite samples.Total VariationNot computable in generalHigh?

Discrepancy vs. Total VariationDiscrepancyComputable from finite samples.Total VariationNot computable in generalHigh?Related to hypothesis classUnrelated to hypothesis class

Discrepancy vs. Total VariationDiscrepancyComputable from finite samples.Total VariationNot computable in generalHigh?Related to hypothesis classUnrelated to hypothesis classBickel covariate shift algorithm heuristically minimizes both measures

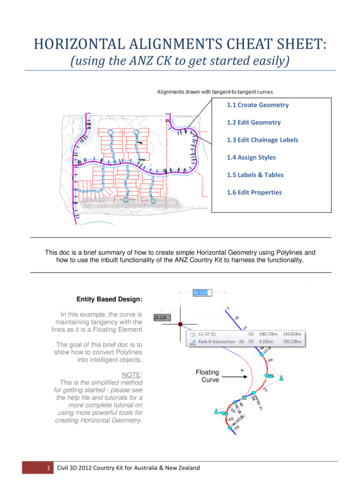

Is Discrepancy Intuitively Correct?4 domains: Books, DVDs, Electronics, KitchenShared VocabularyE&K: super easy, bad qualityB&D: fascinating, boring12Target Error RateB&D, E&KDK9BE,BKDE6BD3EK060708090Approximate Discrepancy100

A new adaptation bound

Representations and the BoundLinear Hypothesis Class:Hypothesis classes from projections:30.30.10011001

Representations and the BoundLinear Hypothesis Class:Hypothesis classes from projections:30.1001Goals for1) Minimize divergence2)

Learning Representations: PivotsSourceTargetfantastichighly recommendeddefectivesturdyleakinglike a charmfascinatingboringread halfcouldn’t put it downwaste of moneyhorrible

Predicting pivot word presenceDo not buyAn absolutely great purchase.A sturdy deep fryer?

Predicting pivot word presenceDo not buy the Shark portable steamer.The trigger mechanism is defective.An absolutely great purchase.A sturdy deep fryer?

Predicting pivot word presenceDo not buy the Shark portable steamer.The trigger mechanism is defective.An absolutely great purchase. . . . Thisblender is incredibly sturdy.Predict presence of pivot wordsgreatgreatgreatA sturdy deep fryer.?

Finding a shared sentiment subspacehighlyrecommendhighly recommend pivotshighlyrecommendgenerates N new featureshighlyrecommend recommend appear?” Sometimes predictors capturenon-sentiment information: “Did highlygreat

Finding a shared sentiment subspacehighlyrecommendhighly recommend pivotshighlyrecommendgenerates N new featureshighlyrecommend recommend appear?” Sometimes predictors capturenon-sentiment information: “Did highlyIgreat

Finding a shared sentiment subspacehighlyrecommendhighly recommend pivotshighlyrecommendgenerates N new featureshighlyrecommend recommend appear?” Sometimes predictors capturenon-sentiment information: “Did highlywonderfulgreat

Finding a shared sentiment subspacehighlyrecommend Letbe a basis for thesubspace of best fit tohighly recommend pivotshighlyrecommendgenerates N new featureshighlyrecommend recommend appear?” Sometimes predictors capturenon-sentiment information: “Did highlywonderfulgreat

Finding a shared sentiment subspacehighlyrecommend Letbe a basis for thesubspace of best fit to pivotshighlyrecommendgenerates N new featureshighlyrecommend recommend appear?” Sometimes predictors capturenon-sentiment information: “Did highlycaptures sentimentvariance in( highly recommend, great )

P projects onto shared subspaceSourceTarget

P projects onto shared subspaceSourceTarget

Correlating Pieces of the ourceHuber Loss0.003Target Error0.253

Correlating Pieces of the andom0.223SourceHuber Loss0.0030.254Target Error0.2530.561

Correlating Pieces of the andom0.223Coupled Projection0.211SourceHuber Loss0.0030.2540.07Target Error0.2530.5610.216

Target Accuracy: Kitchen Appliances8884807672BooksDVDsSource DomainElectronics

Target Accuracy: Kitchen Appliances8887.7%87.7%87.7%8480In Domain (Kitchen)7672BooksDVDsSource DomainElectronics

Target Accuracy: Kitchen Appliances8887.7%87.7%87.7%8484.0%80In Domain(Kitchen)No Adaptation7674.5%72Books74.0%DVDsSource DomainElectronics

Target Accuracy: Kitchen %7674.5%72Books74.0%DVDsSource DomainIn Domain (Kitchen)No AdaptationAdaptationElectronics

Adaptation Error 7636% reduction in error due to74.5%72Books74.0%DVDsSource DomainIn-domain (Kitchen)adaptationNo CorrespondenceCorrespondenceElectronics

Visualizing(books & kitchen)negativevs.positivebooksengagingplot # pagespoorly designedthe plasticpredictableawkward tofascinatingespressoare perfectleakingkitchenmust readgrishamyears nowa breeze

Representation ferences/[1] Blitzer et al. Domain Adaptation with Structural Correspondence Learning. 2006.[2] S. Ben-David et al. Analysis of Representations for Domain Adaptation. 2007.[3] J. Blitzer et al. Domain Adaptation for Sentiment Classification. 2008.[4] Y. Mansour et al. Domain Adaptation: Learning Bounds and Algorithms. 2009.

Tutorial Outline1. Notation and Common Concepts2. Semi-supervised Adaptation Covariate shiftLearning Shared Representations3. Supervised Adaptation Feature-Based ApproachesParameter-Based Approaches4. Open Questions and Uncovered Algorithms

Feature-based approachesCell-phone domain:“horrible” is bad“small” is goodHotel domain:“horrible” is bad“small” is badKey Idea:Share some features (“horrible”)Don't share others (“small”)(and let an arbitrary learning algorithmdecide which are which)

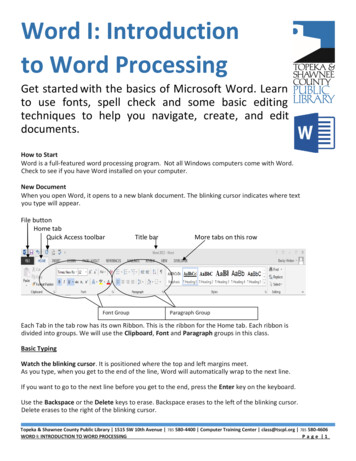

In feature-vector lingo:x Augmentation‹x, x, 0› (for source domain) Featurex ‹x, 0, x› (for target domain) AugmentedFeaturesOriginalFeaturesThe phone is smallW:theW:phoneW:isW:smallThe hotel is lT:theT:hotelT:isT:small

A Kernel PerspectiveIn feature-vector lingo:x ‹x, x, 0› (for source domain) x ‹x, 0, x› (for target domain) Kaug(x,z) 2K(x,z)K(x,z)if x,z from same domainotherwiseWe have ensuredSGH & destroyedshared support

Named Entity Rec.: n

Named Entity Rec.: p /the/PersonGeo-politicalentityOrganizationLocation

Some experimental resultsTaskDombnbcACEnwNERwlunctsCoNLLtgtPubMed br-cmbr-cnbr-cpbr-crTreebank- ly Baseline2.372.11 (pred)4.073.53 (weight)3.713.56 (pred)2.452.12 (all)2.462.10 (linint)0.460.40 (all)2.951.75 (wgt/li)4.153.95 (linint)3.823.44 (linint)4.354.30 (weight)4.154.09 (linint)6.274.72 (linint)5.364.15 (all)6.325.01 (prd/li)6.605.39 (wgt/prd)6.593.11 (all)5.564.19 (prd/li)5.624.55 (wgt/prd/li)9.135.15 (linint)5.754.72 905.415.734.894.424.786.304.65

Some Theory Can bound expected target error: Average training error1ˆ ˆ O(complexity ) t 2 st 1 1 1 O Odisc(S,T) H N Nst Number ofsource examplesNumber oftarget examples

Feature Hashing Feature augmentation creates (K 1)D parameters Too big if K 20, but very sparse!12 04 0 1Feat. Aug.1204 011204 01Hashing21 4411 3

Hash Kernels How much contamination between domains?Weights excludingdomain uPr w u ( x) 2eO ( 2 ) O (1/ m )Hash vector fordomain uTarget (low)dimensionality

Semi-sup Feature Augmentation For labeled data: (y, x) (y, ‹x, x, 0›) (y, x) (y, ‹x, 0, x›)(for source domain) (for target domain) What about unlabeled data? Encourage agreement: ht ( x) hs ( x) wt x ws x 0

Semi-sup Feature Augmentation For labeled data: (y, x) (y, ‹x, x, 0›) (y, x) (y, ‹x, 0, x›)(for source domain) (for target domain) What about unlabeled data? (x) { ( 1, ‹0, x, -x›) , (-1, ‹0, x, -x›) }Loss on veLoss on –veLoss on unlabeled Encourages agreement on unlabeled data Akin to multiview learning Reduces generalization bound

Feature-based References T. Evgeniou and M. Pontil. Regularized Multi-task Learning(2004). H. Daumé III, Frustratingly Easy Domain Adaptation. 2007. K. Weinberger, A. Dasgupta, J. Langford, A. Smola, J.Attenberg. Feature Hashing for Large Scale MultitaskLearning. 2009. A. Kumar, A. Saha and H. Daumé III, Frustratingly EasySemi-Supervised Domain Adaptation. 2010.

Tutorial Outline1. Notation and Common Concepts2. Semi-supervised Adaptation Covariate shiftLearning Shared Representations3. Supervised Adaptation Feature-Based ApproachesParameter-Based Approaches4. Open Questions and Uncovered Algorithms

A Parameter-based Perspective Instead of duplicating features, write: wt ws vAnd regularize ws and vtoward zerowtws

Sharing Parameters via Bayes 0wyxNN data pointsw is regularized to zeroGiven x and w,we predict y

Sharing Parameters via Bayes 0wsyx Nswswtyx Nt Train model on sourcedomain, regularizedtoward zeroTrain model on targetdomain, regularizedtoward source domain

Sharing Parameters via Bayes 0 w0wswtyyx Nsx Nt Separate weights foreach domainEach regularizedtoward w0w0 regularizedtoward zero

Sharing02)w Nor(0,ρParametersvia0wk Nor(w0, σ2)Bayes Separate weights forNon-linearize:each domainw0w0 GP(0, K) Each regularizedwk GP(w0, K)toward w0wkyx Nk K Tasks:w0 regularizedClustertowardw0 Nor(0,σ2) zero2wk DP(Nor(w0, ρ ), α) Can derive “augment”as an approximation tothis model

Not all domains created equal Would like to infer treestructure automaticallyTree structure shouldbe good for the taskWant tosimultaneously infertree structure andparameters

Kingman’s Coalescent A standard model forthe genealogy of apopulationEach organism hasexactly one parent(haploid)Thus, the genealogy isa tree

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

A distribution over trees

Coalescent as a graphical model

Coalescent as a graphical model

Coalescent as a graphical model

Coalescent as a graphical model

Efficient Inference Construct trees in a bottom-up manner Greedy: At each step, pick optimal pair (maximizesjoint likelihood) and time to coalesce (branch length) Infer values of internal nodes by belief propagation

Message passing onNotall domainscreatedcoalescenttree; efficientlydone by belief propagationInference by EM:E: compute expectationsover weightsequal0w0M: maximize treestructurewkGreedy agglomerativeclustering algorithmyx Nk K

Data tree versus inferred tree

Some experimental results

Parameter-based References O. Chapelle and Z. Harchaoui. A machine learningapproach to conjoint analysis. NIPS, 2005. K. Yu, V. Tresp, and A. Schwaighofer. Learning Gaussianprocesses from multiple tasks. ICML, 2005. Y. Xue, X. Liao, L. Carin, and B. Krishnapuram. Multi-tasklearning for classication with Dirichlet process priors.JMLR, 2007. H. Daumé III. Bayesian Multitask Learning with LatentHierarchies. UAI 2009.

Tutorial Outline1. Notation and Common Concepts2. Semi-supervised Adaptation Covariate shiftLearning Shared Representations3. Supervised Adaptation Feature-Based ApproachesParameter-Based Approaches4. Open Questions and Uncovered Algorithms

Theory and PracticeHypothesis classes from projections:30.10011) Minimize divergence2)

Open QuestionsMatching Theory and PracticeTheory does not exactly suggest what practitioners doPrior KnowledgeF2-F1/i/Speech Recognition/e//i//e/F1-F0Cross-LingualGrammar Projection

More Semi-supervised ferences/Self-training and Co-training[1] D. McClosky et al. Reranking and Self-Training for Parser Adaptation. 2006.[2] K. Sagae & J. Tsuji. Dependency Parsing and Domain Adaptation with LRModels and Parser Ensembles. 2007.Structured Representation Learning[3] F. Huang and A. Yates. Distributional Representations for Handling Sparsity inSupervised Sequence Labeling. 2009.

What is a domain anyway? Time? News the day I was born vs news today? News yesterday vs news today? Space? News back home vs news in Haifa? News in Tel Aviv vs news in Haifa?Suggest acontinuousstructure Do my data even come with a domain specified?Stream of x,y,d datawith y and d sometimeshidden?

We’re all domains: personalization adapt learn across millionsof “domains”? share enough information tobe useful? share little enoughinformation to be safe? avoid negative transfer? avoid DAAM (domainadaptation spam)?

ThanksQuestions?

Running with Scissors Title: Horrible book, horrible. This book was horrible. I read half, suffering from a headache the entire time, and eventually i lit it on fire. 1 less copy in the world. Don't waste your money. I wish i had the time spent r